Defragged Zebra

Ubuntu repository addition and removal

Add repo:

sudo add-apt-repository ppa:doctormo/wacom-plus

Remove repo:

sudo add-apt-repository -r ppa:doctormo/wacom-plus
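For use in scripts, the two commands above can be wrapped so they run without the "Press [ENTER] to continue" prompt. This is a minimal sketch. ppa_manage and the DRY_RUN switch are hypothetical names introduced here. -y (--yes) and -r (--remove) are standard add-apt-repository options.

```shell
# Hypothetical helper: add or remove a PPA non-interactively.
# -y skips the confirmation prompt, -r switches add-apt-repository to removal.
# Set DRY_RUN=1 to print the command instead of executing it.
ppa_manage() {
  local action="$1" ppa="$2"
  local -a cmd=(sudo add-apt-repository -y)
  case "$action" in
    add)    ;;                      # nothing extra needed for adding
    remove) cmd+=(-r) ;;            # same command plus -r removes the repo
    *) echo "usage: ppa_manage add|remove ppa:owner/name" >&2; return 2 ;;
  esac
  cmd+=("$ppa")
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "${cmd[*]}"                # show what would be run
  else
    "${cmd[@]}"                     # run it for real
  fi
}
```

For example, DRY_RUN=1 ppa_manage remove ppa:doctormo/wacom-plus prints the removal command without running it.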

iDRAC password-less login – iDRAC certificate login

Summary

Enabling certificate (SSH public-key) login on iDRAC lets you run racadm commands quickly across many servers without typing a password each time, which is extremely useful for automation. This post shows how to set up key-based login for an iDRAC user and how to run commands against multiple servers in sequence.

First, log in to the iDRAC over SSH and list the user slots:

jonas@hydra:~$ ssh root@192.168.1.120
root@192.168.1.120's password:
/admin1-> racadm
racadm>>get idrac.users
racadm get idrac.users
iDRAC.Users.1 [Key=iDRAC.Embedded.1#Users.1]
iDRAC.Users.2 [Key=iDRAC.Embedded.1#Users.2]
iDRAC.Users.3 [Key=iDRAC.Embedded.1#Users.3]
iDRAC.Users.4 [Key=iDRAC.Embedded.1#Users.4]
iDRAC.Users.5 [Key=iDRAC.Embedded.1#Users.5]
iDRAC.Users.6 [Key=iDRAC.Embedded.1#Users.6]
iDRAC.Users.7 [Key=iDRAC.Embedded.1#Users.7]
iDRAC.Users.8 [Key=iDRAC.Embedded.1#Users.8]
iDRAC.Users.9 [Key=iDRAC.Embedded.1#Users.9]
iDRAC.Users.10 [Key=iDRAC.Embedded.1#Users.10]
iDRAC.Users.11 [Key=iDRAC.Embedded.1#Users.11]
iDRAC.Users.12 [Key=iDRAC.Embedded.1#Users.12]
iDRAC.Users.13 [Key=iDRAC.Embedded.1#Users.13]
iDRAC.Users.14 [Key=iDRAC.Embedded.1#Users.14]
iDRAC.Users.15 [Key=iDRAC.Embedded.1#Users.15]
iDRAC.Users.16 [Key=iDRAC.Embedded.1#Users.16]

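Let’s use user slot 10 (iDRAC.Users.10) for this example and check its current settings. The empty UserName shows that the slot is unused: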

racadm>>get iDRAC.Users.10
racadm get iDRAC.Users.10
[Key=iDRAC.Embedded.1#Users.10]
Enable=Disabled
IpmiLanPrivilege=15
MD5v3Key=
!!Password=******** (Write-Only)
Privilege=0x0
SHA1v3Key=
SHA256Password=
SHA256PasswordSalt=
SNMPv3AuthenticationType=SHA
SNMPv3Enable=Disabled
SNMPv3PrivacyType=AES
SolEnable=Disabled
UserName=
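When scripting this across many servers, it helps to check a slot before overwriting it. This is a minimal sketch. It assumes an unused slot always prints an empty UserName= line, as in the output above. slot_is_free is a hypothetical helper that reads that output on stdin.

```shell
# Hypothetical helper: exit 0 if the "racadm get iDRAC.Users.N" output on
# stdin shows an empty UserName (slot unused), exit 1 otherwise.
slot_is_free() {
  local line
  # "|| [ -n "$line" ]" also processes a final line without a newline
  while IFS= read -r line || [ -n "$line" ]; do
    line="${line%$'\r'}"            # tolerate CRLF line endings
    case "$line" in
      UserName=)  return 0 ;;       # empty user name: slot is free
      UserName=*) return 1 ;;       # a name is set: slot is taken
    esac
  done
  return 1                          # no UserName line found: treat as taken
}
```

For example: ssh root@192.168.1.120 "racadm get iDRAC.Users.10" | slot_is_free && echo "slot 10 is free". This relies on racadm printing the same format when called non-interactively over SSH, which is an assumption.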

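Set the user name, password and privileges, then enable the account. Privilege 0x1ff grants full iDRAC administrator rights, and an IpmiLanPrivilege of 4 corresponds to the IPMI Administrator level: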

racadm>>set iDRAC.Users.10.UserName jonas
racadm set iDRAC.Users.10.UserName jonas
[Key=iDRAC.Embedded.1#Users.10]
Object value modified successfully
racadm>>set iDRAC.Users.10.Password calvin
racadm set iDRAC.Users.10.Password calvin
[Key=iDRAC.Embedded.1#Users.10]
Object value modified successfully
racadm>>set iDRAC.Users.10.Privilege 0x1ff
racadm set iDRAC.Users.10.Privilege 0x1ff
[Key=iDRAC.Embedded.1#Users.10]
Object value modified successfully
racadm>>set iDRAC.Users.10.IpmiLanPrivilege 4
racadm set iDRAC.Users.10.IpmiLanPrivilege 4
[Key=iDRAC.Embedded.1#Users.10]
Object value modified successfully
racadm>>set iDRAC.Users.10.Enable enabled
racadm set iDRAC.Users.10.Enable enabled
[Key=iDRAC.Embedded.1#Users.10]
Object value modified successfully
racadm>>exit
/admin1-> exit
CLP Session terminated
Connection to 192.168.1.120 closed.
jonas@hydra:~$
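The same five settings can be generated for any slot, which is handy when preparing many iDRACs. This is a minimal sketch. idrac_user_cmds is a hypothetical helper that only prints racadm commands using the values from this post. It assumes the password contains no spaces or quotes.

```shell
# Hypothetical helper: print the racadm commands used above for a given
# user slot, user name and password (one command per line).
# 0x1ff = full iDRAC privileges, IPMI LAN privilege 4 = Administrator.
idrac_user_cmds() {
  local slot="$1" name="$2" password="$3"
  local key="iDRAC.Users.${slot}"
  printf 'racadm set %s.UserName %s\n' "$key" "$name"
  printf 'racadm set %s.Password %s\n' "$key" "$password"
  printf 'racadm set %s.Privilege 0x1ff\n' "$key"
  printf 'racadm set %s.IpmiLanPrivilege 4\n' "$key"
  printf 'racadm set %s.Enable enabled\n' "$key"
}
```

One way to apply them is to run each line over SSH: idrac_user_cmds 10 jonas 'S3cret' | while IFS= read -r c; do ssh -n root@192.168.1.120 "$c"; done. The -n flag stops ssh from consuming the remaining lines of the loop's input. Expect one password prompt per command until root also has a key.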

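If you don’t already have an SSH key pair on the client machine, generate one. An empty passphrase, as used here, allows fully unattended logins: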

jonas@hydra:~$ ssh-keygen -t rsa
Generating public/private rsa key pair.
Enter file in which to save the key (/home/jonas/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /home/jonas/.ssh/id_rsa.
Your public key has been saved in /home/jonas/.ssh/id_rsa.pub.
The key fingerprint is:
43:15:av:24:2f:55:c5:5c:y5:v2:75:3e:ad:fa:f0:eb jonas@hydra
The key's randomart image is:
+--[ RSA 2048]----+
| |
| |
| |
| o . |
| + S . |
| o + o |
| . o o + . |
|+.o .o . |
|=o ..=B. |
+-----------------+
jonas@hydra:~$
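To generate the key from a script instead, here is a minimal sketch. ensure_ssh_key is a hypothetical helper. It creates a key only when none exists, and it never prompts. It uses an empty passphrase as in the session above and 2048 bits to match the key shown. Whether your iDRAC firmware accepts larger RSA keys is an assumption to check before changing -b.

```shell
# Hypothetical helper: create an RSA key pair at $1 (default ~/.ssh/id_rsa)
# only if the private key does not exist yet. -N "" = empty passphrase,
# -q = quiet, -f = output path, so ssh-keygen asks no questions.
ensure_ssh_key() {
  local keyfile="${1:-$HOME/.ssh/id_rsa}"
  if [ -f "$keyfile" ]; then
    echo "key already present: $keyfile.pub"
    return 0
  fi
  mkdir -p "$(dirname "$keyfile")"
  ssh-keygen -t rsa -b 2048 -N "" -q -f "$keyfile"
}
```

Passing a separate path, for example ensure_ssh_key ~/.ssh/idrac_rsa, keeps a dedicated key for the iDRACs. ssh then needs -i ~/.ssh/idrac_rsa to use it.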

Display the public key. This is the text that gets uploaded to the iDRAC:

jonas@hydra:~$ cat ~/.ssh/id_rsa.pub
ssh-rsa AAASBAASdfjsgdfnsryserhbnsfgjkdTFXNFTSDtjdRTYjsdrwsrthjsTGJsdRJGKdRTjsrtjksidHMdFgjdNsfgbCFjkdfghikdMddndRTYjdmdyikdr+EYFFTM8et+UH7uHPlC6PwWNJWn147gmN16o6JJBXzEt1MSI5Tz659lOhVO8sNomP7aV3onCS59ioED3ctdD7N4YYomVnkqHxu2SpI7B1SrXXmCi3iwY3Q3TXaYBgRc7IOG7j3P9UgNHcJ3OgFn+qcps9Dq1pXIeWDSEFwCI19T8nOjsZxLCN/DmphuwEG7J6f+q+xqhQ9t0rLwZGCmcCEi9eSnvQSjOtLwHUIJJu7RzS95PAW3qmTwem2YbtHT jonas@hydra
jonas@hydra:~$

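Push the public key to the iDRAC with racadm sshpkauth. Use user index 10 (-i), key slot 1 (-k) and the key text (-t). This is the last time the password is needed: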

jonas@hydra:~$ ssh jonas@192.168.1.120 "racadm sshpkauth -i 10 -k 1 -t 'ssh-rsa AAASBAASdfjsgdfnsryserhbnsfgjkdTFXNFTSDtjdRTYjsdrwsrthjsTGJsdRJGKdRTjsrtjksidHMdFgjdNsfgbCFjkdfghikdMddndRTYjdmdyikdr+EYFFTM8et+UH7uHPlC6PwWNJWn147gmN16o6JJBXzEt1MSI5Tz659lOhVO8sNomP7aV3onCS59ioED3ctdD7N4YYomVnkqHxu2SpI7B1SrXXmCi3iwY3Q3TXaYBgRc7IOG7j3P9UgNHcJ3OgFn+qcps9Dq1pXIeWDSEFwCI19T8nOjsZxLCN/DmphuwEG7J6f+q+xqhQ9t0rLwZGCmcCEi9eSnvQSjOtLwHUIJJu7RzS95PAW3qmTwem2YbtHT jonas@hydra'"
jonas@192.168.1.120's password:
PK SSH Authentication operation completed successfully.
jonas@hydra:~$
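Copying the key text by hand is error-prone. This minimal sketch reads the key from the .pub file and builds the same sshpkauth call. push_idrac_key and DRY_RUN are hypothetical names. The key comment is assumed not to contain single quotes.

```shell
# Hypothetical helper: upload a public key file to an iDRAC user's key slot
# using "racadm sshpkauth -i <user index> -k <key index> -t '<key text>'".
# Set DRY_RUN=1 to print the remote command instead of running ssh.
push_idrac_key() {
  local target="$1" index="$2" keyslot="$3" pubfile="$4"
  local keytext
  keytext="$(cat "$pubfile")" || return 1   # trailing newline is stripped
  local remote="racadm sshpkauth -i ${index} -k ${keyslot} -t '${keytext}'"
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$remote"
  else
    ssh "$target" "$remote"
  fi
}
```

Usage: push_idrac_key jonas@192.168.1.120 10 1 ~/.ssh/id_rsa.pub. The same call inside a for loop covers several iDRACs.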

Verify that the key is installed correctly on the iDRAC. This time no password prompt appears, because the key is already in use:

jonas@hydra:~$ ssh jonas@192.168.1.120 "racadm sshpkauth -v -i 10 -k all"
--- User 10 ---
Key 1 : ssh-rsa AAASBAASdfjsgdfnsryserhbnsfgjkdTFXNFTSDtjdRTYjsdrwsrthjsTGJsdRJGKdRTjsrtjksidHMdFgjdNsfgbCFjkdfghikdMddndRTYjdmdyikdr+EYFFTM8et+UH7uHPlC6PwWNJWn147gmN16o6JJBXzEt1MSI5Tz659lOhVO8sNomP7aV3onCS59ioED3ctdD7N4YYomVnkqHxu2SpI7B1SrXXmCi3iwY3Q3TXaYBgRc7IOG7j3P9UgNHcJ3OgFn+qcps9Dq1pXIeWDSEFwCI19T8nOjsZxLCN/DmphuwEG7J6f+q+xqhQ9t0rLwZGCmcCEi9eSnvQSjOtLwHUIJJu7RzS95PAW3qmTwem2YbtHT jonas@hydra
Key 2 :
Key 3 :
Key 4 :

That’s all the setup needed.

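Assuming the same user and key have been set up on each iDRAC, let’s run a few commands against several servers. Because the iDRAC user name matches the local user name (jonas), ssh needs no user@ prefix: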

jonas@hydra:~$ for i in {131..134}; do echo -n "Server number: $i: "; ssh 192.168.1.$i "racadm serveraction powerstatus"; done

Server number: 131: Server power status: ON

Server number: 132: Server power status: ON

Server number: 133: Server power status: ON

Server number: 134: Server power status: ON

jonas@hydra:~$

jonas@hydra:~$ for i in {1..4}; do echo -n "Server number: $i: "; ssh 192.168.1.17$i "racadm storage get vdisks"; done

Server number: 1: Disk.Virtual.0:RAID.Integrated.1-1

Server number: 2: Disk.Virtual.0:RAID.Integrated.1-1

Server number: 3: Disk.Virtual.0:RAID.Integrated.1-1

Server number: 4: Disk.Virtual.0:RAID.Integrated.1-1

jonas@hydra:~$
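The loops above stop at a password prompt if a server lacks the key, and they can wait for a long TCP timeout on a server that is down. Below is a minimal sketch of a more defensive loop. idrac_each and the SSH_BIN override, which exists only for testing, are hypothetical names. BatchMode and ConnectTimeout are standard OpenSSH options.

```shell
# Hypothetical helper: run one command on several iDRACs in sequence.
# -n: don't read stdin; BatchMode=yes: fail instead of prompting for a
# password; ConnectTimeout=5: give up quickly on unreachable hosts.
# Failures are reported on stderr so they stand out from normal output.
idrac_each() {
  local cmd="$1"; shift
  local host out
  for host in "$@"; do
    if out="$("${SSH_BIN:-ssh}" -n -o BatchMode=yes -o ConnectTimeout=5 "$host" "$cmd" 2>&1)"; then
      printf '%s: %s\n' "$host" "$out"
    else
      printf '%s: FAILED (%s)\n' "$host" "$out" >&2
    fi
  done
}
```

Usage: idrac_each "racadm serveraction powerstatus" 192.168.1.{131..134}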

Everything works as expected. Enjoy your new iDRAC automation powers!

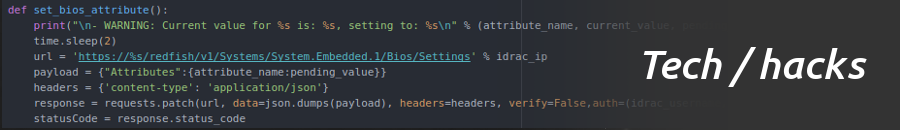

Redfish tutorial videos for beginners

I recently made a series of videos to help people get started with Redfish on iDRAC using either PowerShell or Python. I hope they’ll be useful to anyone starting out with the Redfish API, whether on Dell EMC servers or in general.

For example scripts, see the Dell EMC GitHub repository:

https://github.com/dell/iDRAC-Redfish-Scripting

Redfish with Python: Getting started with the environment

Redfish with Python: Basic scripts

Redfish with Python: Modifying server settings with SCP (Server Configuration Profiles)

Redfish with PowerShell: Setting up the environment

Redfish with PowerShell: Modifying server settings

Redfish with PowerShell: Modifying server settings with SCP (Server Configuration Profiles)